Everyone from Hillary Clinton to President Barack Obama to academics and media critics has voiced concern over the spread of fake news following Donald Trump’s surprise election victory, with some even going so far as to say viral fictitious stories directly influenced the result.

Still reeling from her election defeat, Clinton in a speech in the nation’s capital framed fake news as an existential threat to democracy.

“It’s now clear that so-called ‘fake news’ can have real-world consequences,” she said. “This isn’t about politics or partisanship. Lives are at risk. Lives of ordinary people just trying to go about their days, to do their jobs, contribute to their communities.”

As a result of the chorus of complaints about how blatantly false stories purportedly impacted voters, Facebook said it would clamp down on fake news through partnerships with standard fact-checking organizations. Among the most-shared fugazy stories were one about Pope Francis endorsing Trump, another about an FBI agent investigating Clinton’s emails dying in a horrific murder-suicide, and a purported quote from Trump about running as a Republican because that particular base is gullible.

Blame for Clinton’s loss has been spread far and wide: to Macedonia, where teenagers created phony articles and raked in thousands of dollars; to Russian hackers interfering in the election; and to FBI Director James Comey, for announcing a new probe into Clinton’s emails just weeks before the Nov. 8 election.

It’s no surprise that scapegoats abound following one of the most incendiary and hard-fought presidential campaigns in modern US history and that such speculation overshadowed coverage of Clinton’s apparent shortcomings.

Meanwhile, little attention has been paid to an ever-evolving problem gripping the internet: For all its democratizing prowess, it is saturated with so much information—from traditional media outlets, alternative voices, hyperpartisan blogs, and industry groups funneling propaganda through websites masquerading as legitimate public policy centers—that it’s become increasingly difficult to distinguish between factual news, scientific research and agenda-driven content, academics say.

“Every single topic that is pressing for a citizen to decide upon is being influenced by the information that comes across the digital transom,” Sam Wineburg, founder of the Stanford History Education Group at Stanford University, tells the Press. “You name an issue of public policy, whether it’s the legalization of marijuana, charter schools or attacks on sugary drinks, and the way that a citizen learns about how to form an opinion about those topics is typically through a digital medium, increasingly through a digital medium… And so this issue goes far beyond fake news and far beyond news literacy to implicate the most basic duties of citizenship in the 21st century.”

Research into internet consumption and social media habits has delivered troubling conclusions. Among the findings: The majority of Americans fail to recognize the difference between marketing content and real news, and nearly two-thirds of people are likely to share articles on social media without actually reading the story, meaning they are forming opinions and endorsing stories based solely on headlines.

Yet ensuring that fake news is curtailed has emerged as a chief concern for elected officials, who have pressured the likes of Facebook and Google to tackle the problem. In doing so, they are calling upon the very same institutions that contribute to this age of hyperpartisan politics to clamp down on the ability for such stories to go viral.

So how can tech companies combat fake news without first addressing what appears to be the bigger issue: that news consumers, perhaps through no fault of their own, are struggling to navigate the increasingly convoluted World Wide Web?

‘Pants On Fire’

Last year, Facebook supplanted Google as the largest driver of traffic to news sites in the United States. Much of the content is delivered to Facebook users in a way that is very much undemocratic: The social media giant’s algorithm decides, based on a user’s activity, which stories are more likely to spur engagement, and subsequently dumps related content into their news feed. It’s through this mosaic that Facebook is able to provide marketers with valuable data about specific users: What department stores they’re interested in, where they do their grocery shopping, and their political ideology.

Facebook users who primarily get their news through the site do so in an echo chamber, which means they’re interacting with articles that already confirm their political beliefs, what psychologists call “confirmation bias.”

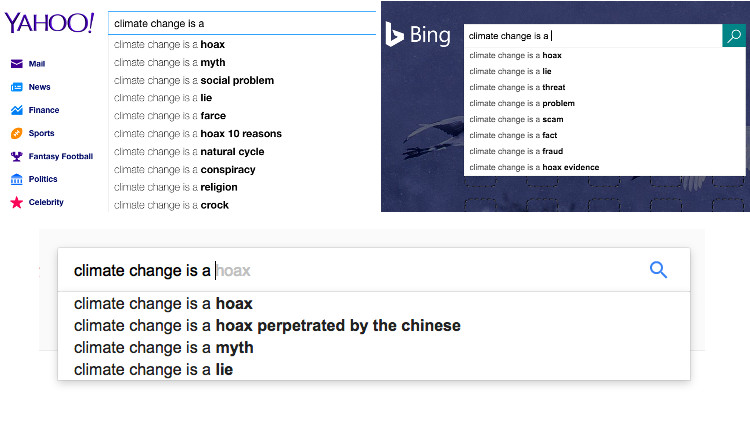

Google works in much the same way. Because it delivers content based on a user’s past searches, a climate change skeptic, for example, is more likely to receive results that align with their viewpoint, says Michael Patrick Lynch, professor of philosophy and director of the Humanities Institute at the University of Connecticut.

“What the internet is good at doing is keeping track of our preferences and predicting our preferences, our desires,” Lynch tells the Press. “So in a sense, it’s a sort of desire machine and we get what we want from it. Of course, what we want and what’s true are two different things.”

Lynch says social media, like Google, has shaped the way we consume content. But sites like Twitter and Facebook are different in that users are not only collecting information, but also distributing it.

That means anyone, whether the teenagers in Macedonia or a high-powered lobbying group, can disseminate potentially faulty information to the masses, who can then share those posts with their friends on Facebook.

Some academics see this as problematic, to say the least.

“We are in a post-Gutenberg era where we have extended freedom of speech to anyone who can spend $20 on a web template and get a high-speed internet connection,” says Wineburg of Stanford, referring to 15th century goldsmith Johannes Gutenberg’s invention of the printing press and its introduction to Europe, launching a revolution in printing technology. “And so we have invented tools that are handling us, and not us them.”

What is true and what’s not took on greater significance in 2016 largely due to the frenzied presidential election. Trump, according to fact-checkers, was the candidate most disengaged from the truth. According to PolitiFact, 69 percent of his statements were considered “mostly false,” “false,” or “pants on fire.” His remarks were completely true only 4 percent of the time.

By contrast, Clinton’s truth scorecard had her making a true statement 25 percent of the time. Her combined false score was 26 percent, according to PolitiFact.

Traditional truths in the public arena were in such short supply in 2016 that Oxford Dictionaries dubbed “post-truth” its “Word of the Year,” and PolitiFact struggled so much to agree on one particularly heinous mistruth that it settled on the broadly defined “fake news” as this year’s most egregious lie.

“Each year, PolitiFact awards a ‘Lie of the Year’ to take stock of a misrepresentation that arguably beats all others in its impact or ridiculousness. In 2016: where to start?” the site wrote. “With such a deep backlash against being truthful in political speech, no one person (though there are world-class frontrunners) and no one political claim perfectly stands out as the dust settles from an extraordinary campaign.”

Among the most-shared fake news stories in 2016 is one that emerged from a phony site called The Denver Guardian with a headline that screamed: “FBI Agent Suspected in Hillary Email Leaks Found Dead in Apparent Murder Suicide.”

A search of that headline on Facebook shows that the debunked story continues to live on the site. Thousands have either shared the article or commented, with one person writing: “I won’t be surprised if she put a hit on this FBI man and his family. This smells fishy and its (sic) stinks.”

What was actually fishy was the story itself, not that it mattered.

When the administrator of one pro-Trump Facebook page that shared the story with the caption “You be the judge” was notified that the story was false, they bristled, offering an unapologetic retort.

“You think the Dems don’t lie by the truck load?” the administrator wrote. “And WIN because of it. I didn’t write the story. Don’t know if it’s true or false and don’t really care. They don’t fight fair why should we?”

Lynch describes two phenomena at play: “implicitly recognizing bullshit…and then saying well the other side does it too” and “sharing [a story] and saying well, ‘I can’t tell what’s true but I’m going to share this anyway.’”

“What both of those phenomenons tend to reveal is that social media, and the sharing that goes on in social media, isn’t actually sometimes about reporting facts,” he adds. “It goes under that guise so people will share information under the idea that they’re sort-of fact-checking the mainstream media or they’re giving alternative views that looks as if they’re engaged in distributing factual information.”

To quantify just how viral fake stories were, a BuzzFeed analysis found that in the last three months of the presidential campaign the top-performing fake news stories inspired more engagement than articles from major news sources.

“I’m troubled that Facebook is doing so little to combat fake news,” Brendan Nyhan, a professor of political science at Dartmouth College, told BuzzFeed. “Even if they did not swing the election, the evidence is clear that bogus stories have incredible reach on the network. Facebook should be fighting misinformation, not amplifying it.”

Before acquiescing, Facebook CEO Mark Zuckerberg said the idea of fake news influencing the election was a “pretty crazy idea.”

A month later, Facebook issued a statement saying it would begin flagging fake news stories and work with such fact-checking sites as PolitiFact and Snopes, which would scrutinize the articles. Disputed stories would still appear in news feeds, but would come with a warning that states: “Disputed by 3rd Party Fact-Checkers.”

Facebook’s newfound dominance in the news distribution business played a direct role in the spreading of disturbingly false stories, says Lynch.

“Google searches are now not as much a source of information of news, according to some data, as Facebook is,” he says. “That’s what made the fake news phenomenon so painful, [it] was because most of the people were getting these fake news stories because of seeing them on Twitter and Facebook and other social media sites that’s distributed, not by some faceless entity, but by one of their friends.”

Much of what’s happening on the internet today, Lynch says, jibes with what esteemed philosopher Marshall McLuhan said decades ago: “The medium is the message.”

“Whether it’s reading from a book or reading online or just talking to people, can shape the way in which we understand information.”

‘Zuckerberg Cannot Save Us’

Between January 2015 and June 2016, researchers from the Stanford History Education Group at Stanford University studied how adolescents analyze news, and found that more than 80 percent of middle school students were unable to differentiate between so-called “sponsored content”—online posts that appear to look like stories but are paid for by advertisers—and news stories.

“Some students even mentioned that it was sponsored content but still believed that it was a news article,” the study’s authors wrote. “This suggests that many students have no idea what ‘sponsored content’ means and that is something that must be explicitly taught as early as elementary school.”

Students are not alone. Stanford’s Wineburg, the study’s lead author, says an industry analysis revealed that 59 percent of adults had the same problem distinguishing the two.

The inability among the general public to distinguish between real news and advertisements is just part of the problem, Wineburg says. The way in which adults typically read digital content and print is the same: vertically. Meanwhile, fact checkers and researchers tend to consume digital content laterally, scrutinizing sources by opening multiple tabs and trying to uncover a website’s true owner.

“The higher something is on a Google search they impute greater trustworthiness to it,” Wineburg says of college students, adding that this represents “a fundamental misunderstanding of search engine optimizers, the algorithms that Google uses.”

A society in which people are better equipped to analyze who’s writing a certain piece of content and the goal behind it is critical to a more stable democracy, he argues.

“The quality of information is to civic intelligence what clean air and clean water are to public health,” Wineburg adds.

Professors at Stony Brook University’s Center for News Literacy are working to instill best practices for the internet in college students.

Howard Schneider, the founding dean of SBU’s School of Journalism, said the center’s news literacy course has been taught to more than 10,000 students on campus and has been adopted by 20 other universities in the United States and abroad.

“When we started the school in 2006 we said it was no longer sufficient for journalism schools just to train journalists,” Schneider tells the Press. “Given all of the changes in the way we get information, and the way it spreads, and the fact that we’re all now publishers and have the ability to basically publish anything we want and spread it, we have to train the audience.”

“The fake news phenomenon…is the latest manifestation of how the communications revolution is making it even more challenging for consumers to find reliable information,” he says.

One of the first techniques the center teaches is to slow down the way in which students process information.

“It’s getting faster and faster,” he said of the 24/7 news cycle, “and it’s a mix of real journalism and fake journalism and rumor and propaganda and infotainment and advertising and native advertising, so the first rule is stop.”

“The second thing is to ask some questions, and these are obvious,” Schneider continues. “In a way you have to think like a journalist thinks: How do you know what I’m saying is true? What’s the evidence? The more outrageous the story the higher the bar should be before you trust or share anything. Check whether the story supports the headline. When you see a headline, read the story, don’t just only read headlines.

“Always ask…who are the sources in the story? How do I know that these are trusted sources? How do I know where it comes from? And on social media you’ve got a whole other world you have to worry about.”

The good news is help is on its way. SBU’s Center for News Literacy is launching a Massive Open Online Course (MOOC) globally on Jan. 7. The course is completely free and will be taught in three languages: English, Spanish and Chinese. (Students who want a certificate will have to pay for one.)

This will give consumers of digital content the opportunity to learn how to weed out fact from fiction, news from opinion, and propaganda from scientifically backed research.

Learning the ways of this post-truth Internet could potentially better equip readers the next time a story with a flashy headline rolls across the screen.

“Mark Zuckerberg cannot save us,” says Wineburg. “The genie is out of the bottle. What used to be the responsibility” of journalists and editors “now falls on the shoulders of anybody who owns a smartphone. So Zuckerberg will come up with a bot that allows us to indicate wobbly content, there’s going to be an enterprising group of Macedonian teenagers who figure out a way to circumvent it.

“What we have to do, is we have to endow ordinary citizens who learn about the world through a digital device to become more thoughtful about evaluating that digital content.”